By Alejandro Erives

Almost a decade ago on Florida’s Gulf of Mexico coastline, I presented a learning session titled “Seeking the P-F Interval.” I opened that presentation with a quip that we had submitted the abstract before actually finding the P-F Interval. Today, I’d like to share the value of understanding that P-F interval.

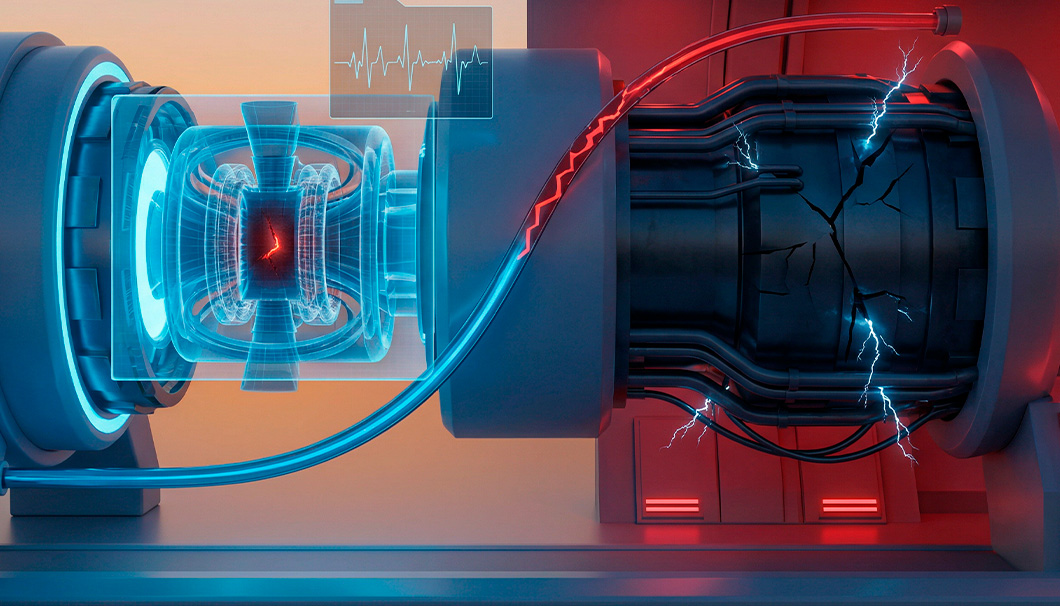

What is the P-F Interval? The P-F Interval is a widely used term / concept in maintenance and reliability circles. In simplest terms, the P-F Interval is the time it takes for a defect to grow from a detectable size to a functional failure. It has been known for some time now that understanding the P-F Interval is critical in determining predictive maintenance inspection frequencies. However, in practice, most programs do not formally quantify this time interval prior to implementing a program.

The goal of predictive maintenance is to alert the equipment owner/user to potential failures (defects) prior to failure and with sufficient time to mitigate the defect or consequences of failure. So, understanding how much warning time an analyst or sensor alert is providing really is the principal factor in how valuable predictive maintenance can be.

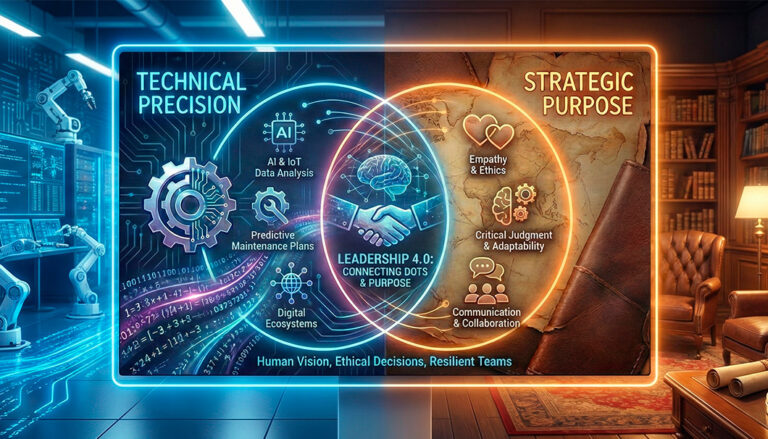

This warning time (i.e. the P-F interval) was considered so important by RCMII author John Moubray that it was part of 3 out of the 4 technical feasibility requirements for applying condition monitoring.

- Is the P-F Interval reasonably consistent? (Is it predictable?)

- Is it feasible to inspect often enough to detect the defect within the P-F interval? (Is it detectable?)

- Is it feasible to successfully intervene prior to failure when a defect is detected? (Is it actionable?)

I summarize these 3 requirements as the PDA of PdM (predictable, detectable, and actionable).
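To make the detectable and actionable checks concrete, here is a minimal sketch in Python. It assumes a single point estimate for the P-F interval and takes the worst case, where a defect becomes detectable just after an inspection, so the guaranteed warning time is the P-F interval minus the inspection interval. The function names and the numbers are hypothetical and only for illustration.

```python
def worst_case_warning(pf_interval_days: float, inspection_interval_days: float) -> float:
    """Warning time left if the defect becomes detectable right after an inspection."""
    return pf_interval_days - inspection_interval_days


def is_actionable(pf_interval_days: float,
                  inspection_interval_days: float,
                  response_time_days: float) -> bool:
    """True if the worst-case warning still covers the time needed to plan and repair."""
    warning = worst_case_warning(pf_interval_days, inspection_interval_days)
    return warning >= response_time_days


# Hypothetical numbers for illustration only.
print(is_actionable(pf_interval_days=90, inspection_interval_days=30, response_time_days=45))
print(is_actionable(pf_interval_days=90, inspection_interval_days=60, response_time_days=45))
```

With a 90-day P-F interval, a 30-day inspection route leaves at least 60 days of warning, which covers a 45-day response. A 60-day route leaves only 30 days, so the same defect may be detected too late to act on. A point estimate like this ignores the spread in real P-F intervals, which is why the consistency (predictable) question matters.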

When trying to quantify the P-F interval, you will find that there are really three determining factors to the P-F interval:

- Condition monitoring technique/tool/analysis

- Defect (type/application)

- Failure Definition

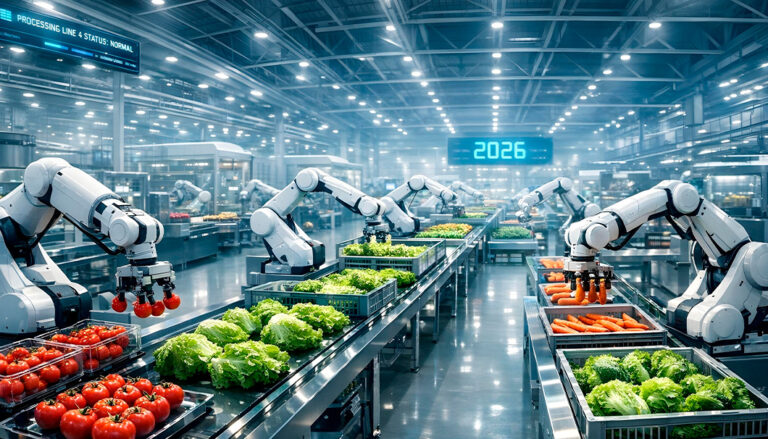
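One way to keep these three factors visible when collecting data is to record them alongside every observed P-F interval. The sketch below is only an assumption about how such records might be structured: the `PFObservation` class, its field names, and the sample records are hypothetical, and it assumes each record ran to functional failure (records that ended in a repair would need different handling).

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass(frozen=True)
class PFObservation:
    technique: str           # condition monitoring technique, e.g. "vibration"
    defect: str              # defect type/application, e.g. "pump bearing wear"
    failure_definition: str  # what counted as "F" for this record
    detected: date           # date the defect was first detected (P)
    failed: date             # date the functional failure occurred (F)

    @property
    def pf_days(self) -> int:
        """Observed P-F interval in days."""
        return (self.failed - self.detected).days


def median_pf_by_group(observations):
    """Median P-F interval in days per (technique, defect, failure definition)."""
    groups = defaultdict(list)
    for obs in observations:
        groups[(obs.technique, obs.defect, obs.failure_definition)].append(obs.pf_days)
    return {key: median(days) for key, days in groups.items()}


# Hypothetical records for illustration only.
records = [
    PFObservation("vibration", "pump bearing wear", "seized bearing",
                  date(2023, 1, 10), date(2023, 3, 11)),
    PFObservation("vibration", "pump bearing wear", "seized bearing",
                  date(2023, 5, 1), date(2023, 6, 10)),
    PFObservation("ultrasound", "pump bearing wear", "seized bearing",
                  date(2023, 2, 1), date(2023, 5, 2)),
]
for key, days in median_pf_by_group(records).items():
    print(key, days)
```

Grouping by all three factors avoids mixing intervals that are not comparable. Changing the technique, the defect type, or the failure definition changes what "P" and "F" mean, and therefore the interval itself.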

The main reason for doing PdM is to drive some action that you weren’t already going to take anyway. Ideally, that action is a successful maintenance intervention. Beyond successful maintenance, when we start to analyze the P-F interval objectively and statistically, we begin to realize that we are really characterizing the underlying factors that contribute to the overall P-F interval. That is, we begin to understand how a program works (or doesn’t). This is an often-misunderstood source of value.

When we can describe the P-F interval as a probability distribution, we can achieve value in the following ways:

- Optimize the timing of maintenance interventions on defective assets

This is the most immediate source of value, as a repair that is scheduled too late likely means failure consequences.

- Reduce the number of schedule breaks for maintenance done too early (out of fear)

Repairs or interventions executed too early also usually have operational impacts (to maintenance and production schedules).

- Answer the question “Can we make it to our scheduled outage?” (with acceptable risk?)

Oftentimes, optimization is about meeting defined timelines, either to meet production goals or to satisfy customer expectations. This usually comes with a request from production to maintenance to defer maintenance until a particular date. The old way of answering may have been “it depends,” relying on the production managers to make the “business decision” (without objective data to make that decision). Being able to answer this question in the affirmative, or to provide context on the expected amount of risk the organization will incur if not, is a huge business value.

- Determine which assets really do need to be on a continuous monitoring schedule, and which can tolerate less frequent (periodic) manual inspections

Most programs today are built on the whims of a program manager, or perhaps on the outcome of a group-think risk/criticality matrix. These decisions rarely dive into the details of how much risk is being incurred at the asset level due to inadequate P-F intervals.

- Improve understanding of how defect severity should affect your maintenance response time

Should a high severity contamination defect on a gearbox be scheduled with the same urgency as a low severity defect on a critical pump’s coupling? Without an objective statistical understanding of these defects, these can be difficult questions to expect your maintenance gatekeepers to manage.

- Understand the impact of false positives on the P-F interval

Most facilities don’t actively track or manage false positives in their program. By their nature, they are sometimes hard to document. False positives degrade the value of the program, but luckily their effect may show up in the P-F interval distribution.

- Assess the accuracy of / uncover gaps in a program’s (analyst’s) severity characterization

Being able to see that different programs, with different methods for estimating defect severity, result in different P-F Intervals is a novel way of evaluating programs against each other.

- Assess the level of warning provided by different technologies

In a similar way to comparing program differences, we can compare different technologies when looking at similar defect types (e.g. ultrasound vs. vibration for pump bearing wear defects, perhaps?).
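As an illustration of the scheduled-outage question, the sketch below treats a list of historical P-F intervals as an empirical distribution and estimates the chance that a defect detected some days ago will survive until the outage. It is a rough sketch under stated assumptions: the `history` values are hypothetical, every historical record is assumed to have run to failure, and the records are assumed to come from comparable defects, techniques, and failure definitions. With a sample this small the estimate is coarse; a fitted distribution (Weibull, for example) could be swapped in for the empirical one.

```python
def survival(pf_days, t):
    """Empirical fraction of historical P-F intervals longer than t days."""
    return sum(1 for d in pf_days if d > t) / len(pf_days)


def prob_reach_outage(pf_days, days_since_detection, days_until_outage):
    """Estimate P(no failure before the outage | no failure so far)."""
    alive_now = survival(pf_days, days_since_detection)
    if alive_now == 0:
        raise ValueError("no historical interval lasted this long; cannot estimate")
    alive_at_outage = survival(pf_days, days_since_detection + days_until_outage)
    return alive_at_outage / alive_now


# Hypothetical P-F intervals in days, for illustration only.
history = [35, 42, 50, 58, 61, 70, 77, 85, 90, 120]

p = prob_reach_outage(history, days_since_detection=20, days_until_outage=30)
print(f"Estimated probability of reaching the outage: {p:.0%}")
```

The conditioning step matters: a defect that has already survived 20 days since detection has used up part of its P-F interval, so the remaining risk is computed relative to the intervals that lasted at least that long. The resulting number gives production and maintenance a shared, objective basis for the deferral decision instead of “it depends.”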

It is for these reasons, “the why”, that we should care about quantifying the P-F interval.

About the Author

Alejandro Erives is a maintenance, reliability & technical sales leader with experience in refining, heavy industry, and industrial services, specializing in failure analysis, predictive maintenance, and Industry 4.0 solutions. His work focuses on turning reliability theory, especially concepts like the P–F interval, into practical, data-driven actions that reduce risk and improve performance. He is the founder of Blackstart Reliability, drawing on a background in mechanical engineering, data science, and vibration analysis.